- Zombie Processes


Hi Folks,

I was lucky enough to experience this:

A CentOS 7.x Box with 4748 Zombie Processes. The uptime was about a year.

#ps output showed that those processes were in the Z or Zs state (<defunct>).

And those processes have been adopted by systemd (PID 1).
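For anyone who wants to repeat that check without ps, here is a minimal Python sketch, assuming a Linux /proc filesystem. It reads the state and parent PID from /proc/<pid>/stat. The helper names parse_stat and list_zombies are made up for illustration and are not part of any tool mentioned in this thread.

```python
import os

def parse_stat(text):
    """Parse one /proc/<pid>/stat record into (pid, comm, state, ppid)."""
    # comm is wrapped in parentheses and may itself contain spaces or ')',
    # so split on the LAST closing parenthesis instead of on whitespace.
    left, right = text.rsplit(")", 1)
    pid_str, comm = left.split("(", 1)
    fields = right.split()
    return int(pid_str), comm, fields[0], int(fields[1])

def list_zombies(proc_root="/proc"):
    """Return (pid, comm, ppid) for every process currently in state Z."""
    zombies = []
    for entry in os.listdir(proc_root):
        if not entry.isdigit():
            continue  # skip non-process entries such as 'meminfo'
        try:
            path = os.path.join(proc_root, entry, "stat")
            with open(path, errors="replace") as f:
                pid, comm, state, ppid = parse_stat(f.read())
        except OSError:
            continue  # the process went away while we were scanning
        if state == "Z":
            zombies.append((pid, comm, ppid))
    return zombies
```

Calling list_zombies() on the affected box should return one tuple per defunct process, and a ppid of 1 in those tuples corresponds to the "adopted by systemd" observation above.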

Here are two myths to bust about Zombie/Orphan Processes.

Myth 1.0

A zombie won't take any resources on the system other than a process table entry.

> It sure does. Something is checking up on something or penalizing something.

> My system could not even respond to systemctl calls.

> I had to issue this: #systemctl --force --force reboot

Myth 2.0

The result is that a process that is both a zombie and an orphan will be reaped automatically.

> Automatically, but how long do we have to wait? It seems like forever, and in my case the PPID is PID 1 (systemd), and PID 1 is not suicidal.

Your thoughts? Insights?

Thanks and regards,

Will

Accepted Solutions


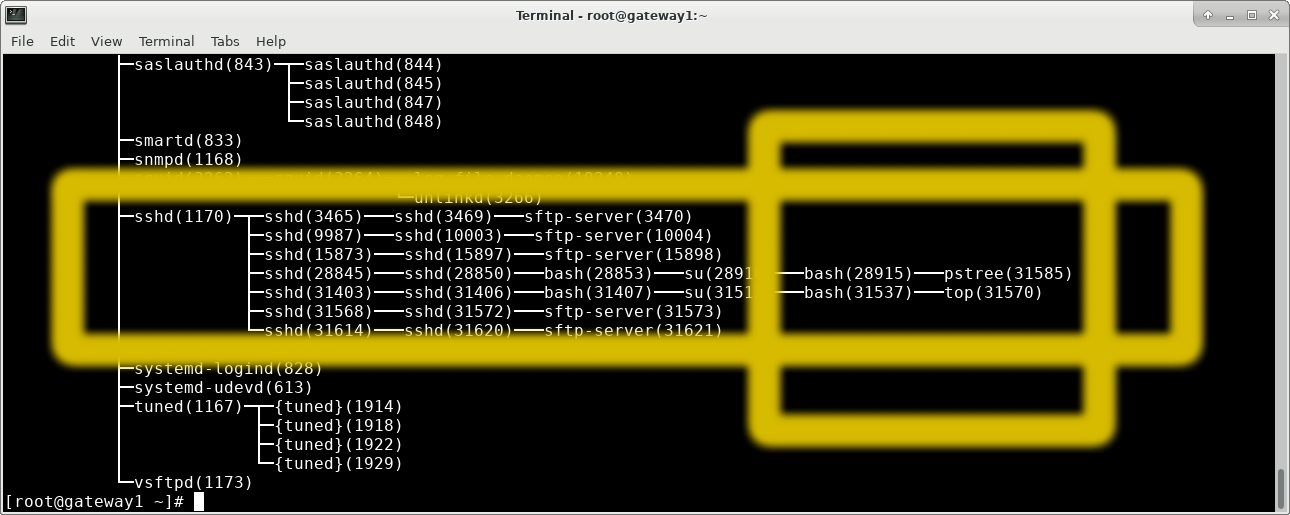

From the list, it looks like you might have had a bunch of stuff mid-flight when the connection was disrupted? SSH is usually pretty good about resuming a connection. Alternatively, it could be whatever is interacting with sftp-server. SFTP connections are received by sshd, but then passed to sftp-server processes to be handled. those sftp-server processes (and possibly the sshds tied to them?)

The real root cause may be that 361-day uptime though. I know that there have been kernel, systemd, and ssh updates produced within the last year. Even though you may have applied those to the system, it's clear that the system has not been restarted. At the very least, you're running a kernel which is unpatched compared to the most recent one [hopefully] installed on the box. I've seen situations where odd behavior occurs when the underlying software is updated, but the running daemons are not restarted to use the updated binaries. For example, if you've been running systemd for 361 days, but an update to systemd updated a library, which is then loaded into your older, running systemd daemons, what happens? Is the older systemd daemon able to just roll with that library, or is something about it changed in such a way that it causes inconsistencies or, if you want the technical term, wonkiness, with your running systemd?
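One way to check for that situation is sketched below, assuming Linux and Python 3. When a package update replaces a file that a long-running process still has mapped, the process generally keeps the old copy, and /proc/<pid>/maps shows the path with a " (deleted)" suffix. The function name deleted_mappings is hypothetical, and the sketch only parses text that you feed it.

```python
def deleted_mappings(maps_text):
    """Return the set of mapped file paths marked '(deleted)' in a maps dump."""
    suffix = " (deleted)"
    stale = set()
    for line in maps_text.splitlines():
        # Line format: address perms offset dev inode [pathname]
        parts = line.rstrip().split(None, 5)
        if len(parts) == 6 and parts[5].endswith(suffix):
            stale.add(parts[5][: -len(suffix)])
    return stale
```

For example, deleted_mappings(open("/proc/1/maps").read()), run as root, would list files that PID 1 still maps although they have been replaced on disk. Deleted shared-memory segments can also show up in the result, so treat it as a hint that a restart is due rather than proof of a problem.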

Whenever I see a system with a really large uptime value, I cringe. I mean, on the one hand, it's cool that the box has been running that long without issue, but on the other, it means that updates (like kernels) haven't been completed. I would expect well-cared-for systems to max out at about 100 days of uptime. Anything beyond that will typically set off my spidey senses.

-STM


Hey, @williamwlk,

Typically, when you see Z processes, it's not them that are the issue - it's the process that created them.
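To make that concrete, here is a small sketch, assuming Linux with /proc and Python 3. The parent forks a child that exits at once, and until the parent calls waitpid() the child sits in state Z. The names proc_state and demo_zombie_then_reap are invented for this example.

```python
import os
import time

def proc_state(pid):
    """Return the one-letter state from /proc/<pid>/stat, or None if gone."""
    try:
        with open(f"/proc/{pid}/stat", errors="replace") as f:
            data = f.read()
    except OSError:
        return None
    # The state letter follows the last ')' and a single space.
    return data[data.rfind(")") + 2]

def demo_zombie_then_reap(timeout=2.0):
    """Fork a child, watch it become a zombie, then reap it."""
    pid = os.fork()
    if pid == 0:
        os._exit(7)  # child: exit immediately with a recognisable code
    deadline = time.monotonic() + timeout
    state = proc_state(pid)
    while state != "Z" and time.monotonic() < deadline:
        time.sleep(0.01)
        state = proc_state(pid)
    # The parent has not waited yet, so the child is defunct at this point.
    _, status = os.waitpid(pid, 0)  # this is the reaping step
    return state, os.WEXITSTATUS(status), proc_state(pid)
```

The function returns the state seen before the wait, the child's exit code, and the state after the wait. The last value being None shows that the process table entry disappears only once the parent collects the status.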

Every parent process that does not at some point either double-fork-and-exit or wait() for its children, is a bad parent and should be reported to social services.
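For reference, the double-fork-and-exit pattern can be sketched like this in Python 3 on a Unix-like system. This is an illustration under simple assumptions, not how any particular daemon does it, and run_detached is a hypothetical name.

```python
import os

def run_detached(fn):
    """Run fn() in a grandchild that the caller never has to wait() for."""
    pid = os.fork()
    if pid == 0:
        # Intermediate child: fork once more, then exit straight away.
        try:
            if os.fork() == 0:
                # Grandchild: its parent exits immediately, so it is
                # re-parented (to PID 1 or a subreaper), which is then
                # responsible for reaping it when fn() finishes.
                fn()
        finally:
            os._exit(0)  # both the intermediate child and grandchild end here
    # Original parent: reap the short-lived intermediate child right away,
    # so nothing defunct is left behind under this process.
    os.waitpid(pid, 0)
```

The design choice is that the original parent waits exactly once, for a child that is guaranteed to exit immediately, and hands responsibility for the long-running work to whoever adopts the grandchild.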

All of what you say is true though. It depends on what the reason for Z processes entering that state is, and of course, systemd is just the last resort at adopting orphans. If the parent process of those zombies exit()ed without wait()ing for them, they will have to be reaped by systemd, and who knows how often that happens, if at all.

I suggest you figure out who the parent creating those zombies is and strace it to see why this happens. Then you can talk to the developers in charge of it and make them do the right thing.
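A rough way to find that parent is to group the defunct processes by PPID. The sketch below assumes the procps ps on Linux and Python 3; zombies_by_parent and snapshot are names chosen for this example. Note that it only shows the current parent, so zombies already re-parented to PID 1 will all be counted against PID 1.

```python
import subprocess
from collections import Counter

def zombies_by_parent(ps_lines):
    """Count zombie children per parent PID from 'pid ppid stat comm' lines."""
    counts = Counter()
    for line in ps_lines:
        parts = line.split(None, 3)
        if len(parts) < 3:
            continue  # blank or malformed line
        ppid, stat = parts[1], parts[2]
        if stat.startswith("Z"):  # matches both Z and Zs
            counts[int(ppid)] += 1
    return counts

def snapshot():
    """Take a live snapshot; the '=' after each column name drops the header."""
    result = subprocess.run(
        ["ps", "-eo", "pid=,ppid=,stat=,comm="],
        capture_output=True, text=True, check=True,
    )
    return result.stdout.splitlines()
```

Something like zombies_by_parent(snapshot()).most_common(5) then gives the PIDs worth attaching strace to first.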

Cheers,

Grega

[don't forget to kudo a helpful post or mark it as a solution!]


Hi @oldbenko

Thank you for your suggestion and I have indeed collected a lot of data before rebooting the system.

The nature of this system is a gateway jump server and/or an sftp server being mainly accessed over a 3G data connection.

One scenario:

PuTTY Client > 3G Data > Server (sshd,sftp-server) > bash > top or ssh

There is NO third-party software and there are no custom-built apps on the system. End users rely on the native applications, and thus the most offending bits are those applications.

Here is our quick reference to that (see the listing at the end of this post): undead instances of bash, top, ssh, sshd, and sftp-server are being adopted by systemd, but systemd won't bother reaping or slaying them.

My humble opinion is that they all lose their immediate parents suddenly and have to be adopted by systemd as the last resort, as you have pointed out.

I think systemd, as a good parent, should have been more efficient at dealing with those undead children. :)

Merry X'mas and Happy New Year to you and all here.

Regards,

Will

[williamwlk@mysandbox zombie]$ cat top-defunct.txt | grep Z | awk '{print $12}' | sort | uniq -c | sort -k1 -n

1 dbus-launch

1 gnome-keyring-d

1 less

1 sudo

1 systemd-logind

2 awk

3 grep

4 more

5 vim

12 ping

12 su

22 systemctl

40 arping

45 top

74 ssh

193 bash

200 abrt-dbus

248 anacron

1528 sshd

2364 sftp-server
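The same tally can be sketched in Python 3. It assumes top's default 12-column layout, where the state is the 8th field and the command is the 12th (the same field the awk command prints). Unlike a plain grep Z, it only counts lines whose state column is exactly Z. The function name count_defunct is hypothetical.

```python
from collections import Counter

def count_defunct(top_lines, state_col=7, cmd_col=11):
    """Tally commands whose state column is exactly 'Z' (0-based columns)."""
    counts = Counter()
    for line in top_lines:
        fields = line.split()
        if len(fields) <= cmd_col:
            continue  # header, summary, or blank line
        if fields[state_col] == "Z":
            counts[fields[cmd_col]] += 1
    return counts
```

For a saved file, count_defunct(open("top-defunct.txt")) returns a Counter, and its most_common() method lists the worst offenders first.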



Hi @Scott

Apologies for the delayed response. I saw and read your post with interest but could not reply properly earlier.

You have clearly pointed out two issues and I am tempted to agree with you.

Issue 1 - SSH Connection got disrupted!

All the stale connections came from clients connecting over good but easily disruptable 3G wireless networks.

Issue 2 - 361 Day Uptime

It is a shame I did not take this into consideration, and I blamed "systemd" and the "kernel" for that matter.

I admit I should be more careful about keeping the system well maintained and cared for.

Thank you for sharing your hands-on experience and important insights.

I will close this case and happily accept yours as the solution :)

Thanks and best regards,

Will

Red Hat

Learning Community

A collaborative learning environment, enabling open source skill development.